WhereIsAI/UAE-Large-V1

Model Documentation

Universal AnglE Embedding

📢 WhereIsAI/UAE-Large-V1 is licensed under MIT. Feel free to use it in any scenario.

If you use it in an academic paper, please cite us; see the Citation section.

Welcome! You can use AnglE to train powerful sentence embedding models and run inference with them.

🏆 Achievements

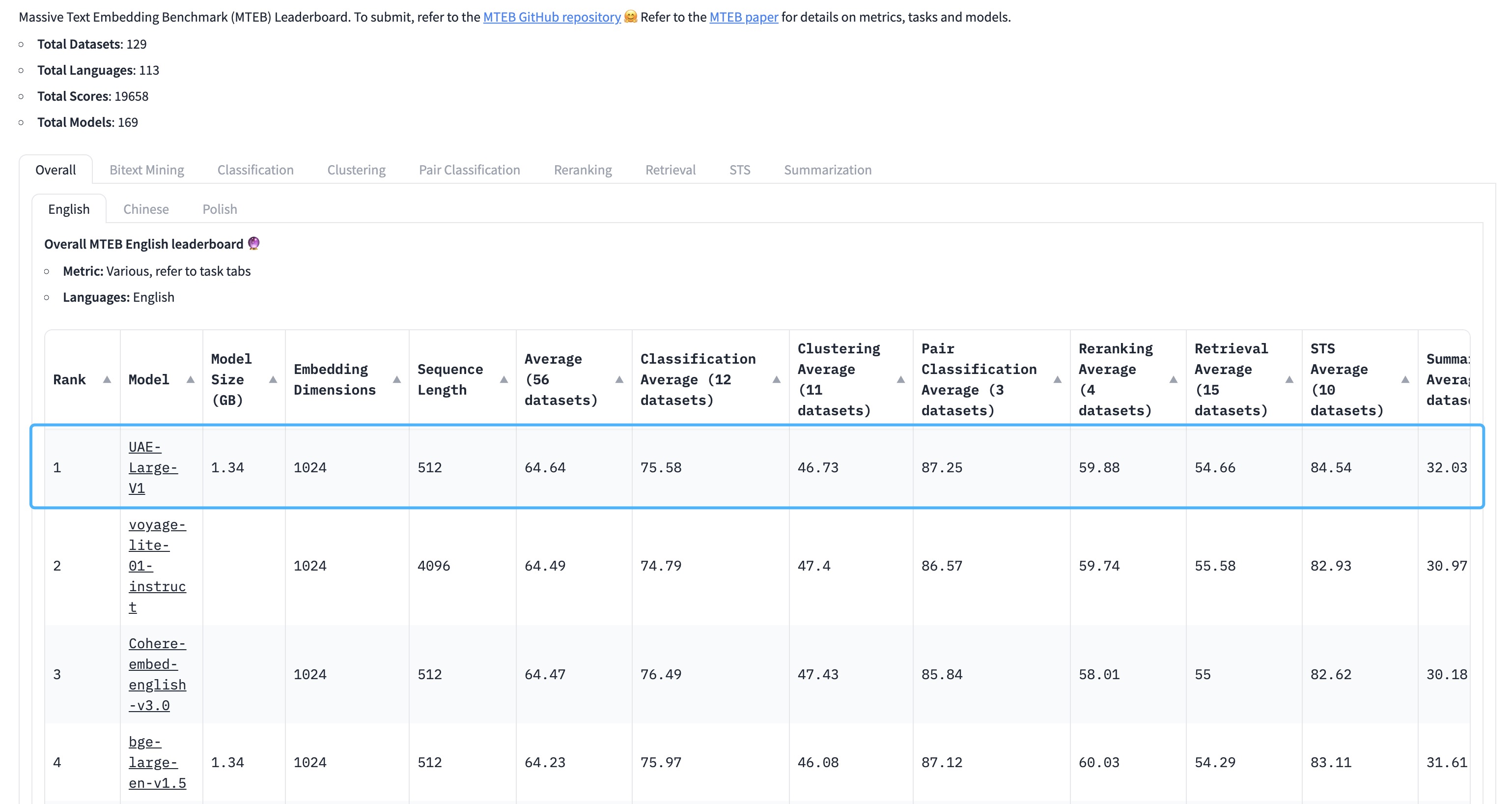

WhereIsAI/UAE-Large-V1 achieves SOTA on the MTEB Leaderboard with an average score of 64.64!

Usage

1. angle_emb

```bash
python -m pip install -U angle-emb
```

1) Non-Retrieval Tasks

There is no need to specify any prompts.

```python
from angle_emb import AnglE
from angle_emb.utils import cosine_similarity

# Load the model with CLS pooling and move it to the GPU.
angle = AnglE.from_pretrained('WhereIsAI/UAE-Large-V1', pooling_strategy='cls').cuda()

doc_vecs = angle.encode([
    'The weather is great!',
    'The weather is very good!',
    'i am going to bed'
], normalize_embedding=True)

# Print the similarity of every unordered pair of sentences.
for i, dv1 in enumerate(doc_vecs):
    for dv2 in doc_vecs[i+1:]:
        print(cosine_similarity(dv1, dv2))
```
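For reference, here is a minimal NumPy sketch of the score printed above, assuming `cosine_similarity` computes the standard cosine similarity and that `normalize_embedding=True` L2-normalizes each vector. The helpers `cosine_sim` and `l2_normalize` are hypothetical names for illustration, not part of `angle_emb`, and the vectors are toy values rather than real model output.

```python
import numpy as np


def cosine_sim(a, b):
    """Cosine similarity between two 1-D vectors."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


def l2_normalize(v):
    """Scale a vector to unit L2 norm."""
    v = np.asarray(v, dtype=np.float64)
    return v / np.linalg.norm(v)


# Toy vectors standing in for sentence embeddings (not real model output).
u = np.array([3.0, 4.0])
v = np.array([4.0, 3.0])

sim = cosine_sim(u, v)
# For unit-length vectors, cosine similarity is just the dot product.
dot_of_unit = float(l2_normalize(u) @ l2_normalize(v))
print(sim, dot_of_unit)
```

Under these assumptions, once embeddings are normalized a plain dot product gives the same score, which can be convenient when comparing many vectors at once.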

2) Retrieval Tasks

For retrieval, apply the prompt `Prompts.C` to the query only, not to the documents.

```python
from angle_emb import AnglE, Prompts
from angle_emb.utils import cosine_similarity

angle = AnglE.from_pretrained('WhereIsAI/UAE-Large-V1', pooling_strategy='cls').cuda()

# Only the query is wrapped in the retrieval prompt.
qv = angle.encode(Prompts.C.format(text='what is the weather?'))

# Documents are encoded without any prompt.
doc_vecs = angle.encode([
    'The weather is great!',
    'it is rainy today.',
    'i am going to bed'
])

# qv holds a single query embedding, hence qv[0].
for dv in doc_vecs:
    print(cosine_similarity(qv[0], dv))
```
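The loop above prints one score per document. A retrieval step usually goes on to sort the documents by that score. The following is a minimal sketch of that ranking, assuming plain cosine similarity; `rank_documents` is a hypothetical helper, not part of `angle_emb`, and the vectors are toy values in place of real embeddings.

```python
import numpy as np


def rank_documents(query_vec, doc_vecs, top_k=3):
    """Return (document index, cosine score) pairs, best match first."""
    q = np.asarray(query_vec, dtype=np.float64)
    d = np.asarray(doc_vecs, dtype=np.float64)
    # Normalize so that the dot product equals cosine similarity.
    q = q / np.linalg.norm(q)
    d = d / np.linalg.norm(d, axis=1, keepdims=True)
    scores = d @ q
    order = np.argsort(-scores)[:top_k]
    return [(int(i), float(scores[i])) for i in order]


# Toy 2-D vectors standing in for a query and three document embeddings.
query = np.array([1.0, 0.0])
docs = np.array([[0.0, 1.0], [1.0, 1.0], [2.0, 0.0]])

ranking = rank_documents(query, docs)
for index, score in ranking:
    print(index, round(score, 4))
```

With the snippet above, this would be called as `rank_documents(qv[0], doc_vecs)`, since `qv` contains a single query embedding.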

2. sentence-transformers

```python
from angle_emb import Prompts
from scipy import spatial  # provides the cosine distance used below
from sentence_transformers import SentenceTransformer

model = SentenceTransformer("WhereIsAI/UAE-Large-V1").cuda()

# Only the query is wrapped in the retrieval prompt.
qv = model.encode(Prompts.C.format(text='what is the weather?'))

doc_vecs = model.encode([
    'The weather is great!',
    'it is rainy today.',
    'i am going to bed'
])

# Cosine similarity is 1 minus the cosine distance.
for dv in doc_vecs:
    print(1 - spatial.distance.cosine(qv, dv))
```

3. Infinity

Infinity is an MIT-licensed server for OpenAI-compatible deployment.

```bash
docker run --gpus all -v $PWD/data:/app/.cache -p "7997":"7997" \
michaelf34/infinity:latest \
v2 --model-id WhereIsAI/UAE-Large-V1 \
--revision "369c368f70f16a613f19f5598d4f12d9f44235d4" \
--dtype float16 --batch-size 32 --device cuda --engine torch --port 7997
```
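Once the container is running, the server can be queried over HTTP. The sketch below uses only the Python standard library and rests on assumptions not stated above: that the server exposes an OpenAI-style `POST /embeddings` route on port 7997, and that the response follows the OpenAI embeddings format (a `data` list whose items carry `index` and `embedding`). The helper names are hypothetical.

```python
import json
import urllib.request

BASE_URL = "http://localhost:7997"   # matches the port published above
MODEL_ID = "WhereIsAI/UAE-Large-V1"


def build_payload(texts):
    """Request body in the OpenAI embeddings format (assumed)."""
    return {"model": MODEL_ID, "input": list(texts)}


def parse_embeddings(response_json):
    """Extract the vectors from an OpenAI-style response, in input order."""
    items = sorted(response_json["data"], key=lambda item: item["index"])
    return [item["embedding"] for item in items]


def embed(texts, base_url=BASE_URL):
    """Send texts to the server; the /embeddings route is an assumption."""
    request = urllib.request.Request(
        f"{base_url}/embeddings",
        data=json.dumps(build_payload(texts)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return parse_embeddings(json.load(response))
```

For example, `embed(["The weather is great!"])` would return one vector per input text, provided the server is reachable and the assumptions above hold. Check the Infinity documentation for the exact routes of the version you deploy.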

Citation

If you use our pre-trained models, please support us by citing our work:

```bibtex
@article{li2023angle,
  title={AnglE-optimized Text Embeddings},
  author={Li, Xianming and Li, Jing},
  journal={arXiv preprint arXiv:2309.12871},
  year={2023}
}
```

Files & Weights

| Filename | Size |
|---|---|
| model.safetensors | 1.25 GB |